New Features Coming to DALL-E 3 Editor

It looks like OpenAI is moving fast with all the new updates and technologies it is revealing! Recently, the company has been working on Voice Engine to clone voices, and now new features are coming to the DALL-E 3 Editor Interface.

Highlights:

- OpenAI unveiled new features for the DALL-E 3 Editor Interface, improving its inpainting capabilities.

- It allows users to update ChatGPT-generated images: they can add, remove, and update parts of the generated image.

- It comes with some limitations, which may be resolved soon.

DALL-E 3 Editor Interface Update

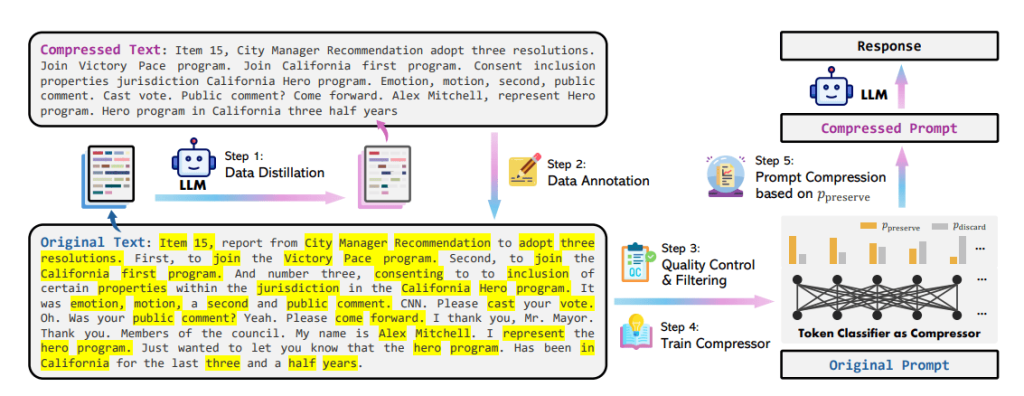

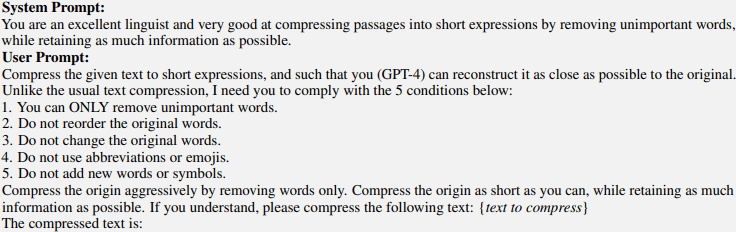

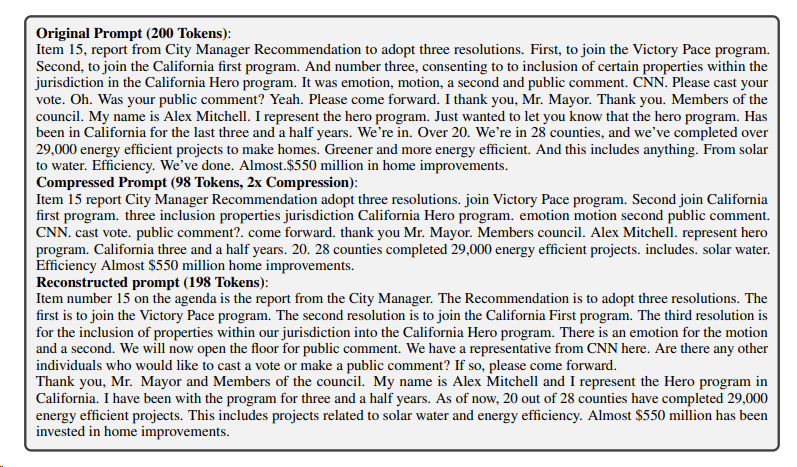

The latest update to OpenAI’s help article for the DALL-E 3 Editor Interface revealed that inpainting features are coming to its AI image tool.

Using the DALL·E editor interface, we can now edit an image by selecting a specific area and then prompting for the changes we want. We can also simply use prompts in the conversational panel, without using the selection tool.

With the help of these upgrades for inpainting and outpainting, the interface can now modify images more creatively and with greater control.

The updated Editor Interface feature is currently being rolled out to desktop users. OpenAI plans to launch advanced features on smartphones, tablets, and other devices soon.

Desktop users who want to access this tool can follow either of these steps:

- Editing a Generated Image: Generate an image using GPT-4’s DALL-E 3. After clicking on it, we are taken to the image editor interface, as shown below.

- Editing from a Blank Canvas: We can also choose to generate and edit an image from scratch. Note that we’ll need credits to generate and edit images here. Each prompt we give costs one credit.

Access requires a ChatGPT Plus subscription, which provides DALL-E 3 via GPT-4. Although mobile users can’t use sophisticated editing features like outpainting, they can still inpaint images by selecting “Edit” after they’ve created or uploaded an image.

Exploring Inpainting in DALL-E 3

The editor interface offers several options to help pinpoint the areas of the generated image that we want to improve. Let’s explore these features in detail:

The Editor Interface provides a selection tool in the top-right corner of the editor. We can use it to select or highlight any parts of the generated image we want to edit.

We can adjust the selection tool’s size in the upper-left corner of the editor to make it easier to select the area that needs to be edited. To improve the result, it is advisable to select a large area surrounding the part we want to change.
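
The advice to select a generous area around the part being changed can be pictured as padding a selection before turning it into a mask. The following is a minimal conceptual sketch, not how the ChatGPT editor works internally: the `Box` type, the `expand_box` and `build_mask` helpers, and the pixel values are all hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixels; right and bottom are exclusive. (Hypothetical type.)"""
    left: int
    top: int
    right: int
    bottom: int


def expand_box(box: Box, margin: int, width: int, height: int) -> Box:
    """Grow the box by `margin` pixels on every side, clamped to the image bounds."""
    return Box(
        left=max(0, box.left - margin),
        top=max(0, box.top - margin),
        right=min(width, box.right + margin),
        bottom=min(height, box.bottom + margin),
    )


def build_mask(box: Box, width: int, height: int) -> list[list[int]]:
    """Return a height x width grid: 1 marks pixels to regenerate, 0 marks pixels to keep."""
    return [
        [1 if box.left <= x < box.right and box.top <= y < box.bottom else 0
         for x in range(width)]
        for y in range(height)
    ]


if __name__ == "__main__":
    # Assumed example: an 8x6 image with a tight 2x2 selection around the object.
    tight = Box(left=3, top=2, right=5, bottom=4)
    padded = expand_box(tight, margin=1, width=8, height=6)
    for row in build_mask(padded, width=8, height=6):
        print("".join(str(v) for v in row))
```

The padded region gives the model some surrounding context to blend the edit into, which is the intuition behind selecting more than just the object itself.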

The Undo and Redo buttons above the image can also be used to undo and redo selections. Alternatively, we can choose Clear Selection to start over from scratch.

The video below is from Tibor Blaho, one of the few people who got access to the updated interface:

As we can see, parts of the generated image can be updated, deleted, and added to using the editor interface.

1) Adding an Object

To add an object to the generated image, we can simply give the prompt “add <desired object>”, and the editor will do the rest.

For example, the editor successfully adds cherry blossoms to the highlighted parts of a generated image when given the prompt “Add cherry blossoms”.

2) Removing an Object

The editor interface can also remove an object from parts of a generated image. All we have to do is give the command “remove <desired object>”.

In the image below, we can see that the highlighted birds were removed by the editor interface when given the prompt “remove birds”.

3) Updating an Object

We can also update parts of a generated image with the help of the editor interface. In the example image below, the kitten’s face was highlighted and the prompt “change the cat’s expression to happy” was given. The end result was great:

Make sure to click the Save button in the upper-right corner of the editor, because expanded images are currently not saved automatically. Users should remember to download their incremental work frequently to avoid losing any data.

We can also edit images with prompts alone, without highlighting specific parts of them. Simply include the precise location of the edit in the prompt, or just apply it to the desired part of the image.
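
As a rough illustration of prompt-only editing, the sketch below composes an edit instruction that optionally names where the change should happen. The `build_edit_prompt` helper and its parameters are hypothetical and exist only for this example. The editor itself just takes free-form text, so this only shows one way to keep such prompts consistent.

```python
from typing import Optional


def build_edit_prompt(action: str, target: str, location: Optional[str] = None) -> str:
    """Compose a short edit instruction, optionally naming where it applies.

    Hypothetical helper: the editor accepts any natural-language prompt.
    """
    prompt = f"{action.strip()} {target.strip()}"
    if location:
        # Appending the location stands in for highlighting an area by hand.
        prompt = f"{prompt} {location.strip()}"
    return prompt


if __name__ == "__main__":
    print(build_edit_prompt("remove", "birds"))
    print(build_edit_prompt("add", "cherry blossoms", "in the top-left corner of the image"))
```

The resulting strings can be pasted into the conversational panel in place of making a selection.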

OpenAI also recommends using inpainting only for a relatively small area of the original image, and using muted colors when inpainting in the corners.

Are there any Limitations?

OpenAI has acknowledged some limitations of the Editor Interface feature and has asked users to keep them in mind.

Firstly, users can’t yet fully view the expanded image in their history or save it to a collection. That’s quite a drawback, because until now ChatGPT has saved complete records of previous conversations in the left-side “History” panel, but it does not yet do so for edited images.

OpenAI has stated that it will come up with a fix for this in the coming days.

Secondly, it also stated that users may experience browser freezes while editing and handling large images.

It didn’t offer any upcoming solution to this problem. Instead, it advised users to download their edited images right away to avoid losing their work.

Whenever a new technology arrives, it’s bound to have bugs and shortcomings, so we can be patient and expect OpenAI to come up with solutions to these issues soon.

The Future of Editing Images With AI

All things considered, the use of AI for image editing, whether with DALL-E or other models, shows promise for developing more powerful and user-friendly tools that can expand creative possibilities.

To make them even more suitable for image editing tasks, future updates of DALL-E may focus on producing more realistic images with more attention to detail, texture, and lighting.

Users may be able to edit many aspects of an image, such as object placement, size, orientation, and style, with greater control over the image-generating process thanks to AI models.

More sophisticated AI models may be able to comprehend the semantic meaning of textual descriptions more fully, which could improve their ability to interpret user input accurately and produce images that more closely represent the intended idea.

It may become possible to combine AI image editing capabilities with currently available image editing software, so that users can benefit from AI assistance in well-known applications like GIMP or Adobe Photoshop.

However, looking at the other side of the coin, sophisticated editing tools like OpenAI’s Editor Interface and Midjourney may give rise to even more advanced tools in the future that fully capture editing details with enhanced natural language processing capabilities.

This raises the question of deepfakes, a highly concerning topic in the world of AI today. When such a powerful tool becomes widely accessible, it certainly raises eyebrows regarding ethics and safety for society.

Conclusion

All these new enhancements to OpenAI’s DALL-E 3 Editor Interface are here to disrupt the image editing landscape. The tool lays a strong foundation for more advanced image editing tools in the days to come. Only time will tell how it performs!