GitHub’s New AI Tool Can Wipe Out Code Vulnerabilities Easily

Bugs, beware, because the Terminator is here for you! GitHub’s new AI-powered Code Scanning Autofix is one of the best things developers could have by their side. Let’s take a deeper look at it!

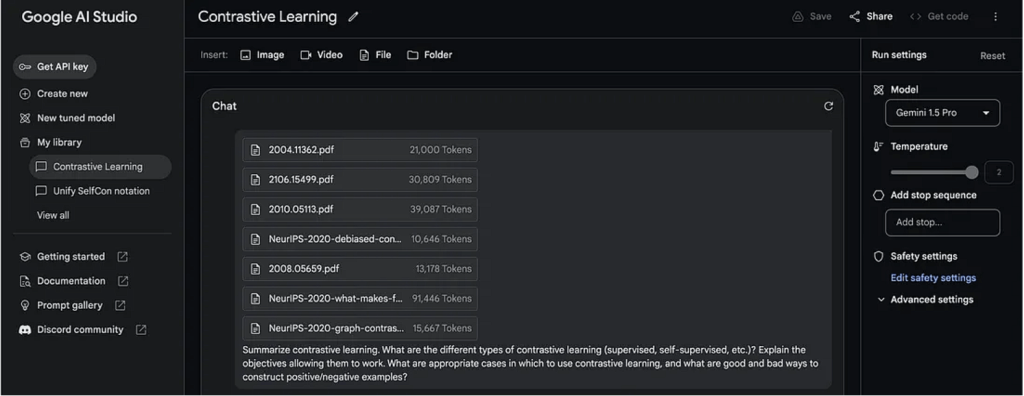

Highlights:

- GitHub’s Code Scanning Autofix uses AI to find and fix code vulnerabilities.

- It will be available in public beta for all GitHub Advanced Security customers.

- It covers more than 90% of alert types in JavaScript, TypeScript, Java, and Python.

What Is GitHub’s Code Scanning Autofix?

GitHub’s Code Scanning Autofix is an AI-powered tool that provides code suggestions, along with detailed explanations, to fix vulnerabilities in the code and improve security. It suggests AI-powered autofixes for CodeQL alerts during pull requests.

It has been released in public beta for GitHub Advanced Security customers and is powered by GitHub Copilot, GitHub’s AI developer tool, and CodeQL, GitHub’s code analysis engine for automating security checks.

The tool covers more than 90% of alert types across JavaScript, TypeScript, Java, and Python. It provides code suggestions that can resolve more than two-thirds of identified vulnerabilities with minimal or no editing required.

Why Do We Need It?

GitHub’s vision for application security is an environment where found means fixed. By emphasizing the developer experience within GitHub Advanced Security, teams are already achieving a 7x faster remediation rate compared to traditional security tools.

The new Code Scanning Autofix is a significant advancement, enabling developers to substantially reduce the time and effort required for remediation. It offers detailed explanations and code suggestions to address vulnerabilities effectively.

Although applications remain a primary target for cyber-attacks, many organizations acknowledge an increasing number of unresolved vulnerabilities in their production repositories. Code Scanning Autofix plays a crucial role in mitigating this by making it simpler for developers to address threats and issues during the coding phase.

This proactive approach will not only help prevent the accumulation of security risks but also foster a culture of security awareness and responsibility among development teams.

Just as GitHub Copilot relieves developers of monotonous and repetitive tasks, code scanning autofix will help development teams reclaim time previously devoted to remediation efforts.

This will reduce the number of routine vulnerabilities encountered by security teams and let them focus on strategies to safeguard the organization amid a rapid software development lifecycle.

How to Access It?

Those interested in participating in the public beta of GitHub’s Code Scanning Autofix can sign up for the waitlist for AI-powered AppSec for developer-driven innovation.

As the code scanning autofix beta is progressively rolled out to a wider audience, efforts are underway to gather feedback, address minor issues, and monitor metrics to validate the efficacy of the suggestions in addressing security vulnerabilities.

At the same time, work is underway to extend autofix support to more languages, with C# and Go coming very soon.

How Does Code Scanning Autofix Work?

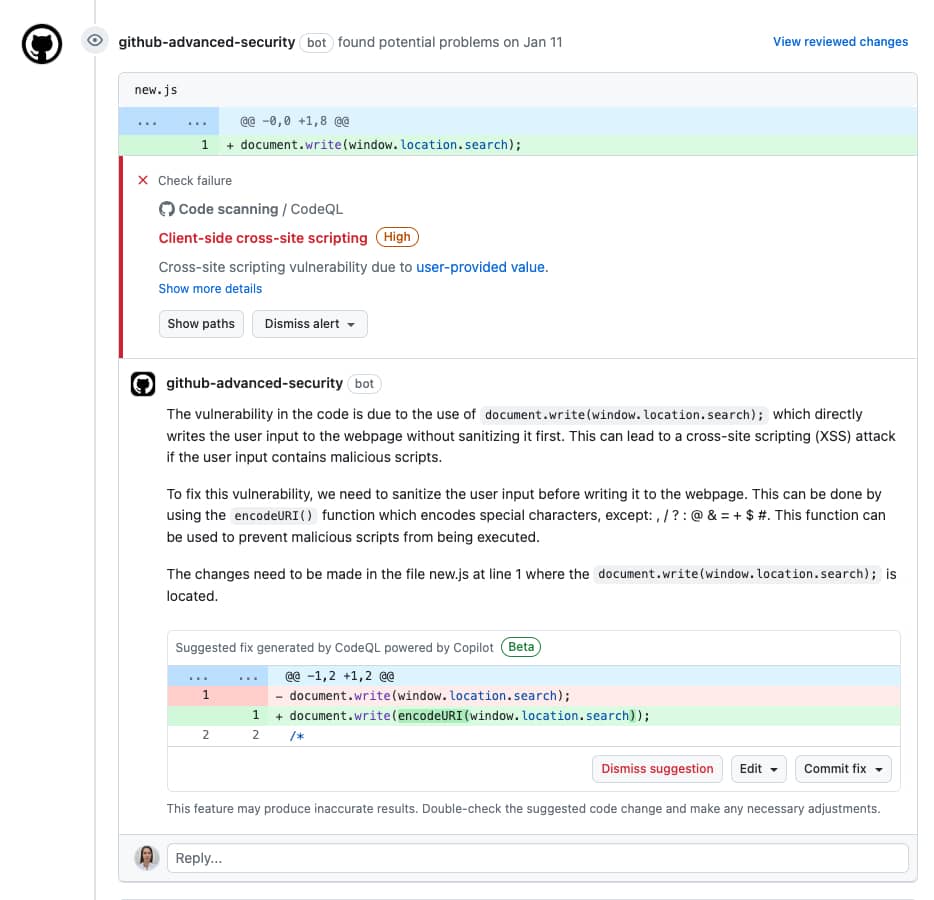

Code scanning autofix provides developers with suggested fixes for vulnerabilities discovered in supported languages. These suggestions include a natural language explanation of the fix and are displayed directly on the pull request page, where developers can choose to accept, edit, or dismiss them.

Additionally, code suggestions provided by autofix may extend beyond changes to the current file, encompassing changes across multiple files. Autofix may also introduce or modify dependencies as necessary.

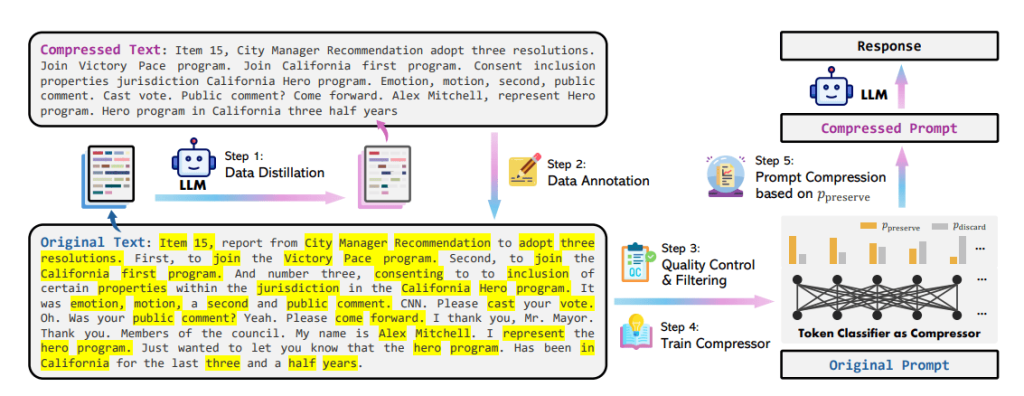

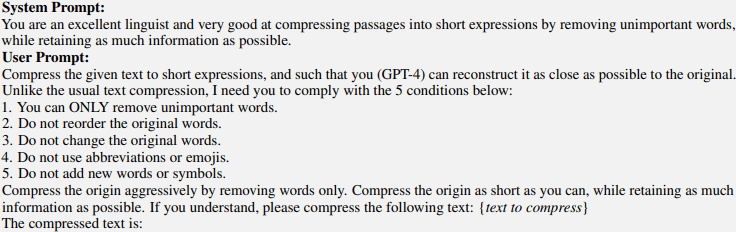

The autofix feature leverages a large language model (LLM) to generate code edits that address the identified issues without altering the code’s functionality. The process involves constructing the LLM prompt, processing the model’s response, evaluating the feature’s quality, and serving it to users.

Underlying code scanning autofix is the powerful CodeQL engine coupled with a combination of heuristics and GitHub Copilot APIs. This combination enables the generation of comprehensive code suggestions to address identified issues effectively.

Additionally, it ensures a seamless integration of automated fixes into the development workflow, enhancing productivity and code quality.

Here are the steps involved:

- Autofix uses AI to provide code suggestions and explanations during the pull request.

- The developer stays in control by being able to make edits using GitHub Codespaces or a local machine.

- The developer can accept autofix’s suggestion or dismiss it if it’s not needed.

As GitHub says, Autofix transitions code security from being found to being fixed.

Inside the Architecture

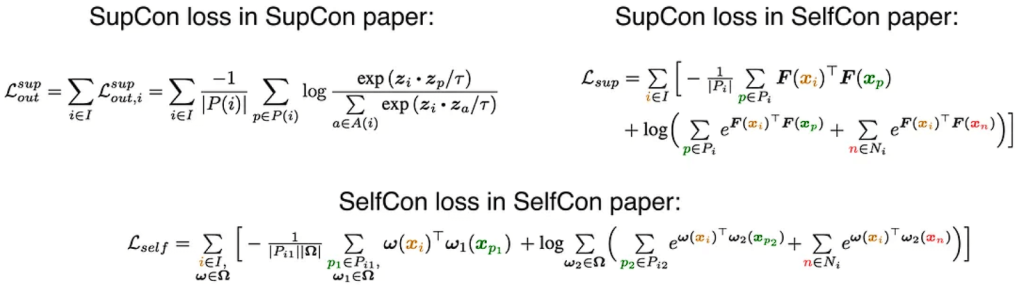

When a user opens a pull request or pushes a commit, the code scanning process proceeds as usual, integrated into an Actions workflow or third-party CI system. The results, formatted in Static Analysis Results Interchange Format (SARIF), are uploaded to the code scanning API. The backend service checks whether the language is supported, and then invokes the fix generator as a CLI tool.
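To make the first stage more concrete, here is a minimal Python sketch of reading alerts out of a SARIF document and checking whether each one is in a supported language. The field names follow the SARIF 2.1.0 layout; the function names, the extension-to-language mapping, and the sample alert message are assumptions made for illustration, not GitHub’s actual backend logic.

```python
import json
import os

# Languages the article lists as covered by the beta.
SUPPORTED_LANGUAGES = {"javascript", "typescript", "java", "python"}

# Hypothetical mapping from file extension to language (illustration only).
EXTENSION_TO_LANGUAGE = {
    ".js": "javascript",
    ".ts": "typescript",
    ".java": "java",
    ".py": "python",
}


def extract_alerts(sarif_text):
    """Return one dict per SARIF result: rule id, message, file, and line."""
    sarif = json.loads(sarif_text)
    alerts = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            location = result["locations"][0]["physicalLocation"]
            alerts.append({
                "rule_id": result.get("ruleId"),
                "message": result["message"]["text"],
                "file": location["artifactLocation"]["uri"],
                "line": location["region"]["startLine"],
            })
    return alerts


def is_supported(alert):
    """Guess the language from the file extension and check the supported set."""
    _, extension = os.path.splitext(alert["file"])
    return EXTENSION_TO_LANGUAGE.get(extension) in SUPPORTED_LANGUAGES


# A tiny hand-written SARIF document; the message text is made up.
SAMPLE_SARIF = json.dumps({
    "version": "2.1.0",
    "runs": [{
        "tool": {"driver": {"name": "CodeQL"}},
        "results": [{
            "ruleId": "js/sql-injection",
            "message": {"text": "This query depends on a user-provided value."},
            "locations": [{"physicalLocation": {
                "artifactLocation": {"uri": "src/db.js"},
                "region": {"startLine": 42},
            }}],
        }],
    }],
})

if __name__ == "__main__":
    for alert in extract_alerts(SAMPLE_SARIF):
        print(alert["rule_id"], alert["file"], is_supported(alert))
```

In a real deployment the language would more likely come from the analysis metadata than from the file extension; the extension lookup just keeps the sketch self-contained.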

Augmented with relevant code segments from the repository, the SARIF alert data forms the basis for a prompt to the large language model (LLM), sent via an authenticated API call to an internally deployed Azure service. The LLM response is filtered to prevent certain harmful outputs before the fix generator refines it into a concrete suggestion.

The resulting fix suggestion is stored by the code scanning backend for rendering alongside the alert in pull request views, with caching implemented to optimize LLM compute resources.
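The caching idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name, the choice of cache key (a hash of the alert plus the surrounding code), and the stand-in generator function are all hypothetical, and the article does not describe how GitHub actually keys or stores its cache.

```python
import hashlib
import json


class SuggestionCache:
    """Cache fix suggestions so identical alerts do not trigger a second LLM call."""

    def __init__(self, generate):
        # `generate` is any callable (alert, snippet) -> suggestion text.
        self._generate = generate
        self._store = {}
        self.misses = 0

    @staticmethod
    def _key(alert, snippet):
        # Hash the alert and code together: if either changes, the key changes.
        payload = json.dumps({"alert": alert, "snippet": snippet}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, alert, snippet):
        key = self._key(alert, snippet)
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._generate(alert, snippet)
        return self._store[key]


def fake_generator(alert, snippet):
    """Stand-in for the expensive model call (hypothetical)."""
    return f"Suggested fix for {alert['rule_id']}"


if __name__ == "__main__":
    cache = SuggestionCache(fake_generator)
    alert = {"rule_id": "js/sql-injection", "file": "src/db.js", "line": 42}
    cache.get(alert, "db.query(userInput)")
    cache.get(alert, "db.query(userInput)")  # served from the cache
    print("model calls:", cache.misses)
```

Keying on the code content as well as the alert means an edited file naturally produces a fresh suggestion instead of a stale one.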

The Prompts and Output Structure

The technology’s foundation is a request to a large language model (LLM), encapsulated within an LLM prompt. CodeQL static analysis identifies a vulnerability and issues an alert pinpointing the problematic code location and any related locations. Information extracted from the alert forms the basis of the LLM prompt, which includes:

- General details about the vulnerability type, typically derived from the CodeQL query help page, which offers an illustrative example of the vulnerability and its remediation.

- The source-code location and contents of the alert message.

- Relevant code snippets from various locations along the flow path, as well as any referenced code locations mentioned in the alert message.

- A specification outlining the expected response from the LLM.
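The four ingredients listed above can be assembled roughly as follows. This Python sketch is an assumption-laden illustration: the function name, the section headings, and the sample inputs are hypothetical, and GitHub’s real prompt wording is not given in the article.

```python
def build_prompt(query_help, alert, snippets, response_spec):
    """Assemble the four prompt ingredients into one text prompt.

    All section headings here are hypothetical placeholders.
    """
    parts = [
        "## Vulnerability background",
        query_help.strip(),
        "## Alert",
        f"{alert['file']}:{alert['line']}: {alert['message']}",
        "## Relevant code",
    ]
    for snippet in snippets:
        parts.append(f"File {snippet['file']}, starting at line {snippet['start_line']}:")
        parts.append(snippet["code"].rstrip())
    parts.append("## Response format")
    parts.append(response_spec.strip())
    return "\n\n".join(parts)


if __name__ == "__main__":
    prompt = build_prompt(
        query_help="Building SQL from user input allows injection. Use parameters.",
        alert={
            "file": "src/db.js",
            "line": 42,
            "message": "This query depends on a user-provided value.",
        },
        snippets=[{
            "file": "src/db.js",
            "start_line": 40,
            "code": "const q = 'SELECT * FROM t WHERE id=' + id;",
        }],
        response_spec="Reply with sections: Explanation, Edits, Dependencies.",
    )
    print(prompt)
```

Keeping each ingredient in its own labeled section makes it easy to trim or reorder snippets when the prompt has to fit within a size limit.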

The model is then asked to show how to edit the code to fix the vulnerability. A format is specified for the model’s output to facilitate automated processing. The model generates Markdown output comprising several sections:

- Comprehensive natural language instructions for addressing the vulnerability.

- A thorough specification of the necessary code edits, adhering to the predefined format established in the prompt.

- A list of dependencies that need to be added to the project, which is particularly relevant if the fix uses a third-party sanitization library not currently used in the project.
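Because the output follows a fixed format, it can be split into sections mechanically. The Python sketch below assumes, purely for illustration, that the three sections arrive under level-two Markdown headings named Explanation, Edits, and Dependencies; the real heading names and edit format are not given in the article, and the sample response is made up.

```python
import re


def parse_response(markdown):
    """Split Markdown into {lowercased heading: body} using '## ' headings."""
    sections = {}
    current = None
    for line in markdown.splitlines():
        heading = re.match(r"^##\s+(.*\S)\s*$", line)
        if heading:
            current = heading.group(1).lower()
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
    return {name: "\n".join(lines).strip() for name, lines in sections.items()}


def list_dependencies(sections):
    """Read '- name' bullet items from the (assumed) dependencies section."""
    dependencies = []
    for line in sections.get("dependencies", "").splitlines():
        line = line.strip()
        if line.startswith("- "):
            dependencies.append(line[2:].strip())
    return dependencies


# A made-up model response in the assumed format.
SAMPLE_RESPONSE = """## Explanation
Escape the user input before building the query.

## Edits
src/db.js: replace line 42 with a parameterized query.

## Dependencies
- sqlstring
"""

if __name__ == "__main__":
    parsed = parse_response(SAMPLE_RESPONSE)
    print(parsed["explanation"])
    print(list_dependencies(parsed))
```

A response that lacks an expected section simply yields a missing key or an empty dependency list, which gives the caller a clear signal to discard the suggestion rather than show a partial fix.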

Examples

Below is an example demonstrating autofix’s ability to propose a solution within the codebase while offering a comprehensive explanation of the fix:

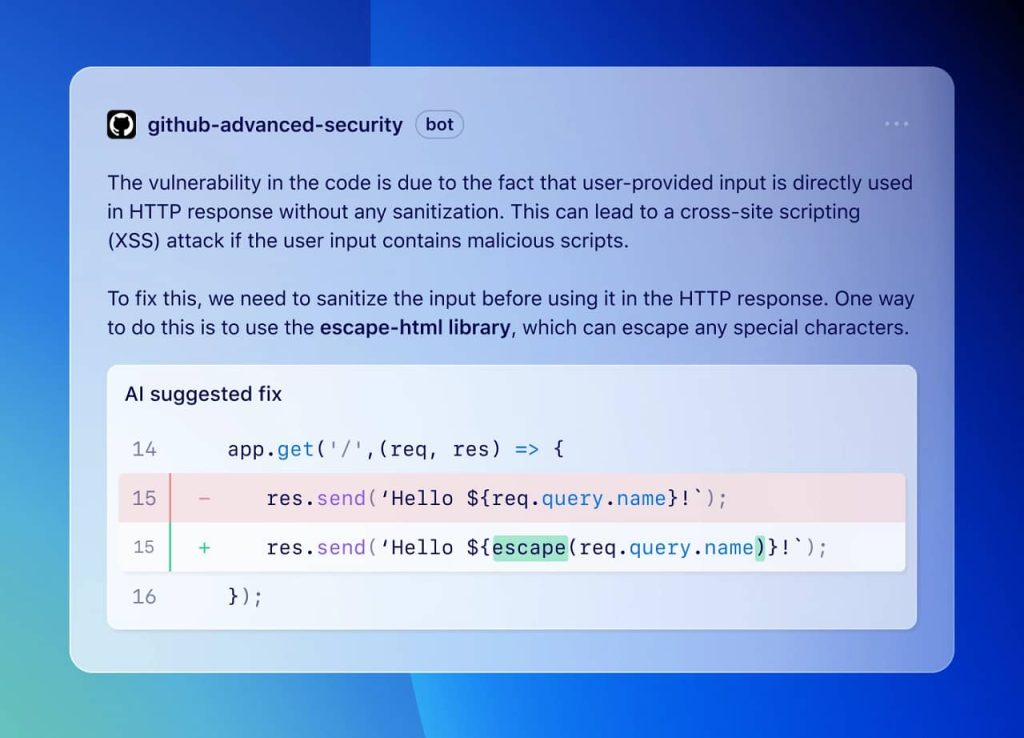

Here is another example demonstrating the potential of autofix:

The examples have been taken from GitHub’s official documentation for Autofix.

Conclusion

Code Scanning Autofix marks an incredible development in automating vulnerability remediation, enabling developers to address security threats swiftly and efficiently. With its AI-powered suggestions and seamless integration into the development workflow, it can empower developers to prioritize security without sacrificing productivity!